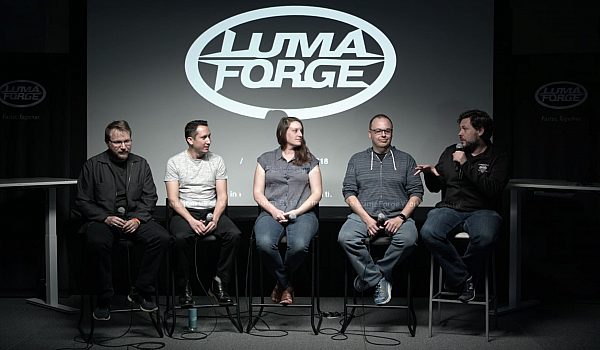

Have you ever wanted to edit from home? Tired of a consistently long commute? Need to edit in one location while shooting happens in another? Michael Kammes discusses the strengths and weaknesses of multiple remote collaboration tools for video editing.

This is what I'm presenting today: "So ya wanna edit remotely?" And you know, it was tough coming up with an actual title for this, because people thought I was gonna tell you how to copy stuff to a hard drive, and then take that home and edit. No, no, that's not what I'm talking about. This is what I'm talking about. This is how I like to edit remotely. This is me with my dog, she's so cute I miss her. That's Lucy, that's how I prefer to edit. Right, editing what you want, where you want, or doing what you do in your bay but doing it somewhere else. And I don't want to assume what you do in your own bay, but whatever you do in your own bay, now you can do it elsewhere.

So, what it is is traditional assembly, right? So you're doing your stringouts, you're putting your shots together, or your creative editorial. Basically, it's got a timeline. If it doesn't have a timeline, then that's not really what I'm gonna cover today, right? So this is just remote creative editorial. So what it's not, it's not review and approve. It's not uploading to things like Vimeo, or YouTube. It's not uploading things to Frame.io or Wiredrive. This is actually editing remotely while the media sits somewhere else. So, let me go over some of the challenges 'cause I think some folks kind of look over what some of those challenges are.

The first one, and usually this comes from business owners, is "Am I gonna be outsourced?", right? The line I always hear, if you know Terry Curren from AlphaDogs, he loves talking about this. "Our jobs are gonna go to India!" No, no, that's not a concern to have right now, but that is a concern to have in the future. The tools we use, right? Because some people use Final Cut 10, I imagine a lot of people in this room are using Final Cut 10. Some people are using Avid, some people are using Premiere, some people are the YouTube generation, they're still cutting with Vegas, because it works. Other challenges are security, all of you know about the hacks that have gone on, right? No one wants to be responsible for that media that gets hacked, or lost, or stolen, or uploaded. And also cost, as a business owner, does it make sense to invest in all this technology just to have someone edit at home, right?

If you already have that four wall facility, why would you pay for that extra infrastructure when they're coming in anyway? You're already paying for HVAC, you're already paying for the power, you're already paying for the equipment, so what's the point? These are the two big ones though, and they involve a lot of math, which is why I like them.

First is latency, right? How many in here are gamers? How many people in here game? Okay. How many times do you hit the damn A button, you're like "I hit it, I hit it, why did I miss it?" Right, or you play Guitar Hero and you're like "I hit that, I hit that note", right? That's latency, right? You hit the button, and what it takes for the system to respond to that key press, that's latency, and the further you get from where the media is, that latency grows. That's a pain in the ass.

We also have fat media, right? We all saw ProRes RAW came out this week, right? Fantastic! Yeah, it's 100 or 150 megabytes a second. That's not a little piece of gristle to chomp through, right? That's a lot. So these are two problems that we have to address. Let me go into that a little bit deeper.

So we talk about fat media, right? The average US data rate for a home user was 64 megabits a second, right? That's your download speed. 15th fastest in the world. I would've thought we would've been higher than that, but, hey, aging infrastructure. Just so you can see what that means in terms of media. See that blue square up at the top? That's your max bandwidth, if you're in a good neighborhood without, you know, we're not talking Fios or Google Fiber, but we're talking 16 megabits a second. That's barely enough to chomp through a stream of H.264 coming off, like a 7D, or an XDCAM stream, right?
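To put rough numbers on the "fat media" problem, here is a minimal sketch that compares the ProRes RAW rates quoted above (100 or 150 megabytes a second) with the two home download speeds mentioned (16 and 64 megabits a second). The function names are made up for illustration, and the only real math is the bytes-to-bits conversion.

```python
# Figures quoted in the talk; the function names are illustrative only.
PRORES_RAW_MEGABYTES_PER_S = (100, 150)  # "100 or 150 megabytes a second"
HOME_LINK_MEGABITS_PER_S = (16, 64)      # the two home download speeds mentioned


def to_megabits(megabytes_per_s):
    """Convert megabytes per second to megabits per second (8 bits per byte)."""
    return megabytes_per_s * 8


def shortfall_factor(stream_megabytes_per_s, link_megabits_per_s):
    """How many times more data the stream needs than the link can carry."""
    return to_megabits(stream_megabytes_per_s) / link_megabits_per_s


for stream in PRORES_RAW_MEGABYTES_PER_S:
    for link in HOME_LINK_MEGABITS_PER_S:
        factor = shortfall_factor(stream, link)
        print(f"{stream} MB/s stream over a {link} Mbit/s link: "
              f"{factor:g}x too big for real-time playback")
```

Even on the faster of the two links, a single stream is more than ten times too large, which is why the paradigms later in the talk lean on proxies or on moving pixels instead of media.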

So you can imagine, if you're trying to do multicam, or you're trying to edit high-res, like ProRes RAW remotely, that's not going to fit down the pipes we currently have. The other one is latency, as I mentioned. The delay between a sent command and a perceived response. Here's a chart I love to show. Okay, so if we're looking at this map that I have on the screen now, you'll see that we have a data center here in New York City, but I'm actually across country in LA. It's 45 milliseconds, and that's if you have a pristine connection. If you start adding in that free modem you got from Comcast for paying 100 dollars a month, or that Netgear router you got from Best Buy or Fry's on discount, all those add levels of latency.
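As a sanity check on that 45 millisecond figure, here is a rough sketch of the physical floor on an LA to New York round trip. It assumes light in fiber travels at about 200,000 km per second (roughly two thirds of its speed in a vacuum) and that the cable follows the great-circle path, which real routes do not. The city coordinates are approximate and the function names are hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0
FIBER_KM_PER_MS = 200.0  # assumption: ~200,000 km/s in fiber, i.e. 200 km per millisecond


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine distance in kilometres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def min_round_trip_ms(distance_km):
    """Best-case round trip: there and back at fiber speed, with no equipment delay."""
    return 2 * distance_km / FIBER_KM_PER_MS


LA = (34.05, -118.24)   # approximate coordinates
NYC = (40.71, -74.01)   # approximate coordinates

distance = great_circle_km(*LA, *NYC)
print(f"Great-circle distance: {distance:.0f} km")
print(f"Best-case round trip:  {min_round_trip_ms(distance):.1f} ms")
```

Under those assumptions the floor lands a little under 40 ms, so a 45 ms pristine connection is already close to what physics allows. Better gear at either end can only trim the part above that floor.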

Also, depending on your connection, if you look at the left over here, we see T1, cable, DSL, cell, all of those add levels of latency. So that becomes compounded, right? Any of you that have credit card bills or know about compound interest, right? Same concept. So if you look down at the bottom, you'll see a good level of what latency, what metrics there are. Monitors usually add 40-70 milliseconds of latency, roughly, depending on what monitor, especially old plasmas. Under 50 milliseconds equals what I call a pleasurable editing experience. That means, when you hit JKL, there's responsiveness. Right?
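Here is a small sketch of how those delays stack up against the 50 millisecond mark. It makes two assumptions the talk doesn't spell out: the delays simply add together, and the threshold applies to the network leg only. The 45 ms link figure is the one quoted earlier for LA to New York; the modem and router numbers are hypothetical placeholders, not measurements.

```python
PLEASURABLE_MS = 50  # threshold quoted in the talk


def total_latency_ms(components):
    """Sum per-hop delays, assuming each hop simply adds to the total."""
    return sum(components.values())


def is_pleasurable(components):
    """True when the summed delay stays under the 50 ms mark."""
    return total_latency_ms(components) < PLEASURABLE_MS


pristine = {"LA to NYC link": 45}
typical = {
    "LA to NYC link": 45,
    "ISP modem (hypothetical)": 5,
    "consumer router (hypothetical)": 3,
}

for name, chain in (("pristine", pristine), ("typical", typical)):
    verdict = "pleasurable" if is_pleasurable(chain) else "laggy"
    print(f"{name}: {total_latency_ms(chain)} ms -> {verdict}")
```

The point is how little headroom there is: with 45 ms already spent on distance, a few milliseconds of consumer gear is enough to cross the line.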

When you have bad latency, you hit J, you wait, and it plays. Is anyone here a grizzled Avid editor? Okay, Adrenaline? Right, okay, you all know exactly what I'm talking about, you hit J and you wait. We don't want that. The statistic I love here is people say "Y'know we're going to be outsourced to other countries". Look at that latency right there, right, in the east. 230 milliseconds. And this is publicly available information. I think Verizon keeps an updated list quarterly of how fast your connection is from one continent to another, right, and I'll get into why this can be remedied in the future.

So there's three different paradigms of remote editing. And I hope you like my drawings here, it's a little bit better than stick figures. We have cloud editing, which is actually editing in a browser, the proxies actually sit up in your cloud du jour or your data center, but it's outside the four walls of your facility.

Then we have our second paradigm, where you actually have high-res sitting at your home base, at your mothership. You're streaming a proxy up to the cloud and then editing on your edit system at home, or at Starbucks, but the media is actually going through the cloud. And then the third one is something called PC-over-IP which I love, because it's acronyms and I sound smarter.

So, the first one is cloud editing. And all of you know the Golden Rule, right? So you'll see all my drawings have the Golden Rule on there, so you know which one's good, fast, and what's cheap, right? Just to help demystify things a little bit. So cloud-based editing. As I said, I'm defining it as proxy-based, either sitting in your data center or third-party data center. The tools are your browser, so you're editing in your browser.

There's two main solutions out there. One of them is Forscene; has anyone heard of Forscene? By the company called Forbidden, all right, pretty cool, the Olympics have used it, it's great for Preditors. And then there's Avid MediaCentral. So the pros of editing in a browser. It's commodity hardware, right? Because all you're using is the resources that the browser is using, and usually it's in Java. It's the same interface, right, so no matter where you go, it looks exactly the same. It can be really inexpensive, you're usually paying per month for access. It's perfect for Preditors who are doing stringouts. Right, everyone knows what a Preditor is? And I'm not talking Chris Hansen, I mean, like producer, editor, okay.

There are field workflows. Because it's a browser, you can upload media through your browser, right? So you can work remotely and upload media from your shoot. It also, if you're talking about Forscene, interops with several NLEs. So you can actually export an XML, an AAF, an EDL, those of you who love your CMX-3600s, you can still do that, right? The cons are it's a limited toolset, right? All of you who are editors have spent tens of thousands of hours editing in your tool du jour. So how much would it suck to say, "Now do your job with a limited toolset, with a tool you haven't spent ten thousand hours on", right? That's a rub, no one really wants that. It's an unfamiliar interface, it's not the Avid, it's not the Final Cut 10, it's not the Premiere that you're used to. And you still have to do an offline/online workflow, which is going to be around for a while, folks.

Don't let ProRes RAW fool you. Offline/online workflow will be around for quite a while. So the traditional workflow, if you hadn't guessed, is you're uploading your raw files, or you use a server on-prem, it converts the files to H.264, you would then edit in a browser, and then you export your EDL, again, CMX-3600 if you're so inclined, and then conform in your NLE. So again, this is much more for Preditors and producers.

The second paradigm, which is what most people want, is the ability to have your high-res at your home base, excuse me, at your mothership, and then edit remotely at home or Starbucks. Okay, media's at your facility, and right now the solution, it's your favorite NLE, as long as it's Avid. And the solution is Avid Media Composer Cloud Remote, yes that's a name, and it requires MediaCentral. So this is using the product formerly known as Interplay, this is using MediaCentral, all your media sits at home base and you sit at home with a copy of Media Composer, and you're logging in via the Media Composer application, with an extra plugin, and you're streaming media in real time inside the Avid Media Composer interface. There's a couple pros and cons, right?

You know Avid, right? Everyone here knows Avid? Yeah, some of you, okay. It's the same ecosystem, if you've cut in Avid, you know how to cut remotely in Avid 'cause you're using the same interface. Familiar workflows. There's field workflows, you can actually edit with content that you've shot in the field, and it will upload in the background, and once it's done uploading, it'll instantly switch over and start editing the media that's now uploaded to the mothership. So that's pretty cool. It adds shared projects, which people love. The cons are the systems have to be homogenized.

Avid, as you know, is a stickler for making sure your versions are tight, right? So you have to have the right OS, and the right copy of Media Composer, and the right driver version, and that can be a pain in the butt. You can edit anywhere, and I mean almost, because even though I mentioned Starbucks, you're not gonna get good throughput at a Starbucks, right? You need a robust connection. Got 150K? Yeah, it's not really inexpensive right now, unfortunately, so be prepared to mortgage your house. And the devil's in the details. This would require a full-time administrator, it's not something that you set it and forget it like a jellyfish, right? It's something that you actually need to have people administer.

So as I said, media is added to MediaCentral, this is usually a hard drive of media back at MediaCentral, it's uploaded into MediaCentral, formerly Interplay, you then do your editorial inside Media Composer Cloud Remote, and then, at some point when you're done with your edit, you go back to the mothership, or pass along your project file, and they online there. They re-conform back to the high-res.

The third paradigm is something really cool that really hasn't made its way into the post industry, except for Avid. You'd think Avid paid me to be here, they didn't. They didn't, trust me.

So, PC-over-IP is PC, personal computer, over IP. How many here are familiar with TeamViewer? Right, it's kinda like that only it works. Right, PC-over-IP is remote-secured KVM, it's a window into another system elsewhere, right? So it's like Remote Desktop, it's like GoToMyPC, only it's guaranteed quality. So in this case, the computer itself is sitting back at your facility along with your media. So that's where you would have your baller workstation. That's where you would have your Mac or your PC.

The tools? Your favorite NLE, because, remember, the system is sitting back at the mothership, it's not at your house. There are two solutions, there's a company called Teradici, which has it only for Windows, then there's a company called Amulet Hotkey, which I think is simultaneously the weirdest and most brilliant name ever. Amulet Hotkey, I have no idea what it means, but Amulet Hotkey takes the Teradici card and puts it in an external enclosure so you can use it on your Mac. As I said, there's some consumer solutions that are kind of like that, TeamViewer, GoToMyPC, VNC, those are all software-based. So here's kinda the paradigm. You have an external box that you're usually running a VM on, or maybe it's just a baller workstation. You have a card in there that goes to your IP network, it then goes across, over the interwebs and then back at your home base, you have a receiver that receives that video.

Those of you who ever did any work in the '70s or read history books, are familiar with the whole hub and spoke methodology, right? And the data center methodology where you had dumb terminals and then the WOPR supercomputer, right? Everyone got the WOPR joke, right? Right? Okay. So, as I mentioned, centralized technology, so it's one person to administer it. It's like you're there, because you are. You're looking into a window. Familiar workflows. The cons are there's no real field workflow, because remember, this is just a window into another computer. How do you copy media to that if you're remote, right? So it becomes kind of a rub.

There are such things as zero clients, which is a software window that you can run on a laptop that would look into the other system, but it's still only a window into another system. You can edit anywhere again, almost. You know, Starbucks probably won't cut it. And as I said, you can't do anything else remotely with the OS, because at that point, again, it's a window into another system. I had to do this, because people quite often tell me "Why can't I use GoToMyPC and use VNC?", right? Or TeamViewer. Because, you don't get sustained throughput, right, there's no quality of service there, color accuracy is a joke, frame accuracy, don't even try it.

I know some people who have finished an edit, and then they get a call while they're on vacation, and they're saying, "Look, you fat-fingered a lower third, can you remote in and change that?" Sure, you can do that. But at that point, that's not review and approve, that's making a change to something you already know. We have audio and video compression and sync, so again, your color's gonna suffer, your audio's gonna suffer, and your audio will most likely be out of sync, and latency is still an issue if you're across the country or across the globe. So those are the three methodologies.

However, there is something in the immediate future. Again, Avid. Avid is now spinning up Media Composer in the cloud, so you can use Teradici technology, and actually remote into a system that you're spinning up in Microsoft du jour. So you can grow and contract your edit, how many people are on your edit team, by spinning this up. I don't know when this is going to ship, they're telling me by the end of the year, I don't know. But this is what the future's going to be. How many of you have ever gone into Google and created a bucket? When you create a bucket on Google, you tell it what geography you want that bucket to be in. Why? Because that's the bucket that's close to where you are. That's a pain in the butt if you're uploading media to a bucket that's close by, but someone is editing remotely.

That means you have to create a bucket at every geography around the world, so whoever's editing has it close to them to cut down that latency. So the future. The future is proxies are gonna be generated by a user, or in the distant future when we have enough bandwidth, you'll upload high-res and the proxies will be created in the cloud. Proxy media will be distributed amongst all the data centers around the world, so no matter where you are, you're closest to where that data is. Editorial apps will understand web protocols. Right now, they don't. They understand local media or some media that's sitting on your NAS or SAN.
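The "closest bucket" idea can be sketched in a few lines: given a measured round trip to each copy of the proxies, point the editor at the lowest one. The region names below are hypothetical labels rather than real cloud region identifiers, and the latency values are illustrative (45 and 230 ms echo figures from earlier in the talk, the 12 ms one is invented).

```python
PLEASURABLE_MS = 50  # threshold quoted earlier in the talk


def closest_region(latency_ms_by_region):
    """Return the region whose measured round trip is lowest."""
    return min(latency_ms_by_region, key=latency_ms_by_region.get)


def usable_regions(latency_ms_by_region, limit_ms=PLEASURABLE_MS):
    """Regions responsive enough for editing, sorted fastest first."""
    under = [r for r, ms in latency_ms_by_region.items() if ms < limit_ms]
    return sorted(under, key=latency_ms_by_region.get)


# Hypothetical measurements for an editor sitting in LA.
measured = {
    "west-coast-copy": 12,
    "east-coast-copy": 45,
    "overseas-copy": 230,
}

print("Edit against:", closest_region(measured))
print("Usable copies:", usable_regions(measured))
```

In practice the measurements would come from probing each data center from wherever the editor happens to be, which is exactly why the proxies need to exist in more than one place.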

So understanding cloud protocols, or object-based media, would be imperative. I think first, you're gonna see a local app that runs on your system that points to the cloud, and later, it's going to be apps and SaaS, which is what Media Composer is doing. And that's how you edit remotely, and that's how you can get ahold of me, and thank you so much for coming by.
